SEO Has Never Been More Valuable. Or More Difficult.

Over the past decade, search engine optimization has quietly transformed from a technical marketing specialty into one of the most strategically important disciplines in digital business. What was once treated as a tactical function handled by junior marketers has evolved into a field that sits at the intersection of data science, product strategy, editorial judgment, reputation management, and revenue generation. In 2026, the gap between an average SEO and an elite search strategist is no longer marginal. It can determine whether a business captures millions of dollars in organic demand or disappears behind competitors in an increasingly crowded digital landscape.

Part of this transformation comes from the changing nature of search itself. Traditional search engine results once offered ten blue links and a relatively predictable hierarchy of rankings. Today, the search experience is far more complex. AI-generated answers, knowledge panels, entity recognition systems, and increasingly conversational search queries have dramatically reduced the amount of visibility available to most websites. In many cases, there is effectively only one winner. Whether it appears as the top organic result, a cited source in an AI response, or a trusted entity recognized by search engines, a single brand often captures the majority of attention while others receive little visibility at all.

This shift has raised the stakes for businesses that rely on search visibility. Winning search today requires more than optimizing a page for keywords or building a handful of backlinks. It requires understanding how search engines evaluate expertise, how entities and brand reputation influence ranking decisions, how links function as credibility signals, and how content ecosystems build long-term authority around complex topics. The professionals capable of navigating this landscape are rare, and the businesses that employ them increasingly treat search expertise as a strategic investment rather than a marketing experiment.

At the top of the field, the economics reflect this reality. Elite search strategists who can consistently deliver measurable growth in organic visibility are among the most valuable operators in digital marketing. In the most competitive industries, the difference between a mediocre SEO strategy and an exceptional one can be worth millions of dollars in revenue over time. It is not unusual for the very best SEO professionals to command salaries and consulting fees that exceed one million dollars per year when their work directly drives that level of business impact.

Yet despite the rising importance of search, many companies still misunderstand what SEO actually involves. They focus on superficial metrics, underestimate the complexity of the discipline, or assume that cheaper providers can deliver the same results as the most experienced practitioners. In reality, modern SEO has become one of the most sophisticated and competitive areas of digital marketing.

Understanding why requires looking beyond tactics and into the deeper mechanics of how search visibility is earned.

TL;DR

Search engine optimization has evolved into one of the most complex and competitive disciplines in digital marketing. The professionals who consistently succeed in this field are not simply technicians who understand keyword research or backlinks. They operate as hybrid strategists who combine data analysis, editorial thinking, technical expertise, and reputation building into a coherent growth system.

This article argues that the difference between average and elite SEO professionals has widened dramatically as search engines increasingly rely on signals such as entity reputation, topical expertise, and trusted citations to evaluate information. As a result, businesses that invest in world-class search strategy often capture disproportionate value from organic demand, while competitors relying on outdated tactics struggle to gain visibility.

The core idea is simple: when search becomes a zero-sum game where only a handful of brands receive meaningful exposure, the people capable of winning that competition become extraordinarily valuable.

Search engine optimization was once treated as a supporting marketing function. It generated useful traffic, but rarely shaped the strategic direction of a company. That assumption is increasingly outdated. Organic search still drives roughly **53% of all trackable website traffic** according to BrightEdge research, meaning the professionals responsible for search visibility are influencing one of the largest acquisition channels in modern business. When a single ranking page can determine which company captures the majority of inbound demand, the discipline responsible for that outcome stops being a tactical marketing task and starts looking much more like a strategic growth function. This shift helps explain why elite search strategists are increasingly valuable operators in digital marketing. When the difference between mediocre SEO and exceptional SEO can influence millions of dollars in long‑term revenue, paying extraordinary compensation for the professionals capable of producing those outcomes begins to make rational business sense.

SEO Is No Longer Just a Tactical Role

The era where SEO could be treated as a narrow, tactical checklist is over. Modern SEO sits at the intersection of data analysis, editorial judgment, technical infrastructure, product thinking, brand strategy, and digital public relations, and each of those layers can be the difference between visibility and invisibility. The number of decisions that influence search performance has expanded dramatically, and the consequences of getting them wrong are now measured in lost demand and lost revenue, not just a few missed rankings. When organic search is responsible for a material share of discovery, the people responsible for that visibility are not performing a minor marketing task. They are shaping growth.

This transformation has fundamentally changed what the role requires. A senior SEO strategist must understand how search engines evaluate expertise and authority across an entire domain, not just how individual pages are optimized. They must be able to interpret large volumes of data from analytics platforms, keyword research tools, and search console metrics while still exercising editorial judgment about what information actually deserves to rank. They must think about site architecture, internal linking, and content ecosystems in ways that resemble product design as much as traditional marketing.

In practice, the modern SEO operates as a hybrid professional. Part analyst, part editor, part technical architect, and part strategist, the job requires synthesizing information from multiple disciplines to make decisions that affect long‑term search visibility. It also requires something that tools alone cannot provide: judgment. The data used by SEO professionals is widely accessible. Competitors can see the same keyword volumes, the same backlink metrics, and the same ranking pages. The advantage rarely comes from possessing information that others cannot access. It comes from interpreting that information better.

As the competitive environment has intensified, this interpretive layer has become the real differentiator. When dozens of companies can see the same search opportunities, the winners are usually the teams capable of combining data with creativity, editorial depth, and strategic patience. The job therefore becomes less about executing tasks and more about designing systems that accumulate authority over time.

The Shrinking Real Estate of Search

At the same time that the role has become more complex, the available space in search results has become more competitive. Traditional search pages once displayed a relatively straightforward list of results. Ranking first meant appearing at the top of a list that still offered meaningful visibility to many of the results beneath it. Today the search landscape looks very different. AI‑generated summaries, featured snippets, knowledge panels, video results, and a range of other interface elements now compete for attention on the page.

The result is that fewer websites receive meaningful traffic from many queries. Research from SparkToro and Datos suggests that roughly **58.5 percent of Google searches now end without a click**, often because users find the information they need directly within the search interface itself. Even when clicks do occur, the distribution is heavily concentrated at the very top of the results. Large‑scale click‑through research from groups such as Backlinko and Ahrefs consistently shows the first organic result capturing a disproportionate share of clicks.

For businesses competing in organic search, this shrinking real estate has intensified an already zero‑sum contest. In many cases there is effectively one dominant winner: the page that captures the top organic position or becomes the trusted source cited in a search engine’s AI‑generated answer. Everyone else receives a dramatically smaller share of the audience.

This dynamic has important implications for how SEO work is approached. When ranking well for a critical keyword can drive a significant portion of a company’s inbound demand, the strategy behind that ranking cannot be treated casually. A single successful page can generate thousands of qualified visitors every month, while a slightly weaker competitor may receive only a fraction of that traffic despite targeting the same audience.

Imagine a business operating in a competitive software category where thousands of potential customers search for a particular solution each month. If one company consistently captures the top organic position for those queries, it can effectively dominate the early stages of the buying journey. Competitors may still exist, but they are competing for the smaller share of visibility that remains. Over time this advantage compounds as the leading result attracts more links, more mentions, and stronger brand recognition.

This is the economic reality that has elevated SEO from a mid‑level marketing function to a senior strategic discipline. The decisions made by search professionals influence whether a business captures this compounding advantage or watches competitors accumulate it instead.

As a result, organizations increasingly expect their SEO leaders to operate at a level similar to senior strategists in other growth functions. They must understand not only how search engines work, but also how businesses grow, how audiences research products, how reputation signals develop across the web, and how content ecosystems build authority around complex topics. The individuals capable of connecting all of these elements are rare.

Another factor complicating modern SEO is the changing composition of web traffic itself. Over the past fifteen years the share of automated traffic interacting with websites has grown dramatically as search crawlers, indexing systems, and AI training agents increasingly scan the web. Imperva’s Bad Bot Report, along with other reports from cybersecurity and traffic analysis firms, has repeatedly found that automated traffic represents a large share of overall web activity. Modern SEO therefore operates across two audiences simultaneously: humans evaluating information and machines evaluating credibility. The modern SEO is not only writing for readers but also engineering signals that help search systems trust, interpret, and recommend information across traditional results pages and AI-driven search environments.

And when they succeed, the value they create can be extraordinary.

The Specialist vs Generalist Debate in SEO

One of the most persistent debates inside the search industry revolves around whether great SEO practitioners are specialists or generalists. At first glance the answer appears straightforward. SEO involves technical audits, content strategy, analytics, link acquisition, and product-level thinking about site architecture, which would seem to suggest a team of specialists is required. In practice, however, the individuals who consistently produce the strongest results tend to operate as something closer to hybrid strategists. They understand the mechanics of each discipline well enough to guide decisions across all of them, even if execution ultimately involves multiple contributors.

The reason this hybrid model has emerged is simple. Search performance is rarely determined by a single factor. A technically flawless website can fail to rank because it lacks authority. A well-written article can fail because the surrounding domain has no reputation signals. A site with strong backlinks can still struggle if its content ecosystem does not demonstrate real expertise on the topic it claims to cover. The professional responsible for diagnosing these problems therefore cannot think like a narrow technician. They must be capable of seeing the entire system and understanding how each piece influences the others.

The Hybrid Skill Set of Modern SEO

The modern SEO strategist operates at the intersection of several disciplines that historically lived in separate departments. Data analysis is one of them. Interpreting keyword demand, search intent patterns, traffic distribution, and click-through behavior remains a core part of the job. But raw data rarely tells the full story. Two websites can target the same keyword and apply the same on-page optimization principles, yet produce very different outcomes depending on how well they demonstrate credibility and expertise on the subject.

This is where editorial judgment enters the picture. A strong SEO must think like an editor who understands how information should be structured, expanded, and supported with evidence. Search engines increasingly reward content that demonstrates depth, clarity, and trustworthiness, which means SEO work overlaps heavily with content strategy and publishing decisions. The strategist guiding this process must be able to evaluate whether a page genuinely contributes something valuable to the topic or simply repeats what already exists elsewhere on the internet.

Technical thinking is another layer. Site architecture, crawl efficiency, internal linking, and page performance influence how easily search engines can understand and trust a domain. These factors rarely operate in isolation. A technically optimized site without meaningful topical authority may still struggle to compete, while a strong editorial presence can sometimes outperform technically perfect competitors. The strategist’s role is to understand where technical improvements will meaningfully affect visibility and where effort is better invested in reputation, authority, or content depth.

Finally, there is an often overlooked element of the role: digital public relations. Links, citations, and brand mentions across the web act as credibility signals that search engines use to evaluate whether a source deserves visibility. Earning those signals requires outreach, storytelling, and relationship-building with publications and communities. In that sense, elite SEO professionals frequently operate closer to strategic communicators than traditional technicians.

Why Cheap SEO Retainers Rarely Work

This complexity creates a practical problem for businesses that approach SEO as a low‑cost marketing service. Many organizations assume that hiring an agency on a small monthly retainer will produce meaningful organic growth. The reality is usually more nuanced. Low‑priced SEO services are not necessarily dishonest or incompetent. In many cases they simply reflect the economic reality of the market: when budgets are small, the amount of senior strategic attention that can be applied to the problem is also limited.

The economics of agency work make this difficult to avoid. Senior SEO strategists command high salaries because the number of professionals capable of operating across analytics, technical architecture, editorial strategy, and digital PR is relatively small. Industry salary surveys from sources such as Semrush and marketing recruitment firms consistently show experienced SEO leads earning well into six‑figure ranges, with top strategists commanding far more in consulting arrangements. Agencies therefore allocate their most experienced operators to the accounts that can support that level of involvement.

When a company spends only a few thousand dollars per month on SEO, the work is typically performed by capable but more junior practitioners executing a defined set of tasks. Those tasks might include technical audits, page optimization, internal linking improvements, or basic link outreach. None of these activities are inherently wrong, and many agencies deliver them professionally. The limitation is strategic bandwidth. Without experienced oversight guiding where effort should be concentrated, these activities often fail to generate the level of authority required to compete in difficult search environments.

This is why the results from low‑budget SEO engagements can feel underwhelming. A site may score well inside optimization tools. On‑page analyzers might show green indicators for keyword usage, internal linking, and meta tags. Domain authority metrics might even improve slightly after a few backlinks are acquired. Yet the site still struggles to reach the top of competitive search results because those indicators are only proxies for quality rather than guarantees of it.

When the goal is real authority in a crowded market, businesses often need placements strong enough to move competitive terms rather than just decorate a report. That is exactly where our DA 50+ guest post package fits for high-stakes campaigns.

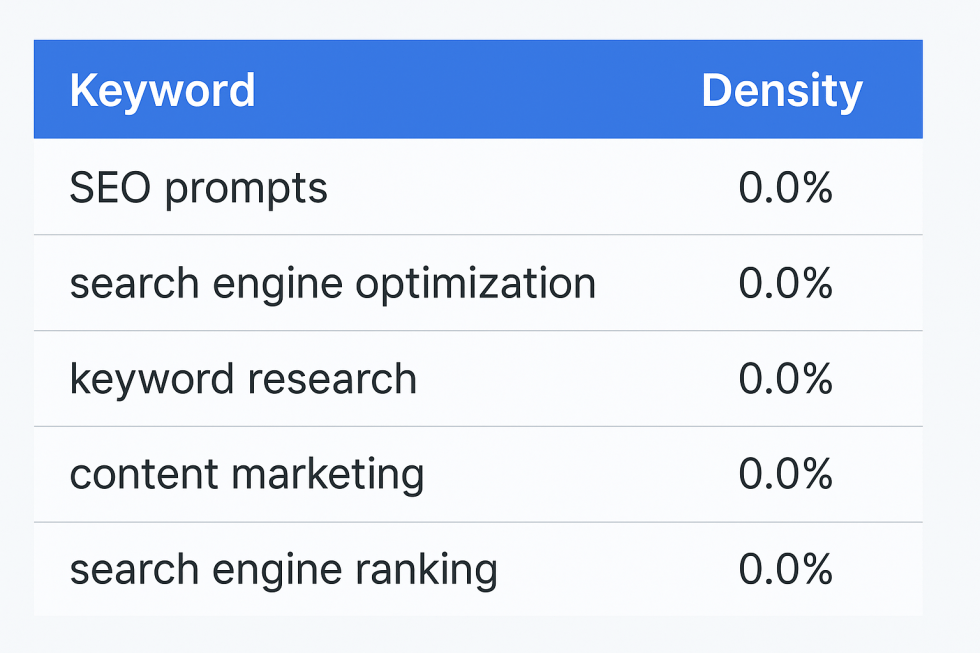

Consider a common scenario seen across many industries. A business invests time ensuring that every page satisfies the recommendations of popular SEO plugins or audit tools. Technical errors are resolved, headings are structured correctly, and keyword density looks ideal. Despite this effort the site remains stuck below competitors who appear, on the surface, to have similar optimization. The difference is usually authority and reputation. The competitors may have stronger editorial coverage of the topic, deeper real‑world experience behind the content, or years of citations from trusted publications that search engines interpret as credibility signals.

In highly competitive niches this dynamic becomes even more pronounced. When multiple companies are optimizing their sites using the same publicly available tools and datasets, the real advantage comes from the strategic decisions made on top of that information. Knowing which topics deserve deeper investment, which pages should attract external citations, and how to build entity‑level reputation across the web requires judgment that cannot be automated by software alone.

This does not mean lower‑cost SEO providers are acting in bad faith. More often it simply reflects a gap between the resources applied to the problem and the difficulty of the competition being faced. Businesses spending two thousand dollars per month on SEO should not realistically expect the same outcomes as companies investing in senior strategists whose work may influence millions in annual revenue. In a competitive, zero‑sum environment, the practitioners capable of producing those outcomes tend to work where their expertise is valued accordingly.

Understanding this distinction is critical for organizations evaluating their own search strategy.

The “Do We Deserve to Rank?” Test

When I’m working on a difficult SEO problem, the first question I ask myself — or the first question I ask a junior on my team — is brutally simple: do we actually deserve to rank? Before blaming algorithms, backlinks, or technical issues, we look at the pages already winning the search results and ask whether our content is genuinely better. Are we saying something new, or are we rewriting what already exists with different headings? Are we clearer, more specific, and more useful for the reader who is actually searching? If the honest answer is no, then the ranking problem isn’t mysterious at all. We simply haven’t produced the best result yet.

This sounds obvious, but teams rarely evaluate their own work honestly. SEO tools encourage people to focus on checklists: keyword placement, heading structures, internal links, readability scores, and so on. Those signals can be helpful, but they are not the real competition. The real competition is the other pages already ranking in the search results, and many of them are there for a reason. When you compare your content side-by-side with the top results and realize they are deeper, clearer, or more credible than what you have produced, the correct response is not frustration with Google. The correct response is to make the page better.

This diagnostic matters because search is a zero-sum environment. There are only a handful of meaningful positions in the search results, and only one of them is the top result. Large-scale click-through studies from organizations like Backlinko and Ahrefs consistently show that the first organic result captures roughly 27–30 percent of all clicks, while visibility drops sharply further down the page. In other words, if ten companies want to rank for the same query, nine of them are going to lose most of the available attention. Google does not owe anyone a ranking, and simply publishing a page on a topic does not guarantee visibility.
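
To make that arithmetic concrete, here is a minimal sketch of how clicks might divide between the top result and everyone else. The function name `split_clicks`, the 10,000 monthly clicks, and the ten-competitor field are illustrative assumptions, not measured data; only the 27 percent share comes from the range cited above.

```python
def split_clicks(total_clicks: float, top_share: float, competitors: int = 10) -> tuple[float, float]:
    """Return (clicks won by the top result, average clicks left per other competitor)."""
    if not 0.0 <= top_share <= 1.0:
        raise ValueError("top_share must be between 0 and 1")
    if competitors < 2:
        raise ValueError("need at least two competitors to compare")
    top_clicks = total_clicks * top_share
    # Upper bound: assumes every remaining click goes to one of the other competitors,
    # ignoring clicks lost to ads, other SERP features, or later pages.
    average_rest = (total_clicks - top_clicks) / (competitors - 1)
    return top_clicks, average_rest


# Hypothetical query with 10,000 monthly clicks; 0.27 is the low end of the cited range.
top_clicks, average_rest = split_clicks(10_000, 0.27)
print(f"Top result: about {top_clicks:.0f} clicks")
print(f"Each of the other nine: about {average_rest:.0f} clicks on average, at most")
```

Even under these generous assumptions, the top result collects more than three times the average of its nine rivals, and real click curves are steeper than an even split suggests.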

Taken together, these numbers reveal something most businesses underestimate about search competition. Large‑scale research from Ahrefs suggests that only about **1.74% of newly published pages reach the top 10 results within their first year**. In blunt terms, that means the overwhelming majority of content created on the internet never reaches meaningful search visibility during its first year of existence. And even reaching the top ten does not mean capturing attention. Click‑through studies consistently show that the vast majority of user clicks concentrate in the first few positions on the page. In practical terms, the percentage of content that reaches truly dominant search visibility is likely closer to one percent of everything published. That level of scarcity explains both the intensity of competition in organic search and why the strategists capable of consistently winning it are so valuable.

This reality explains why the “deserve to rank” test is so useful. The internet is flooded with competent but interchangeable articles that repeat the same explanations, the same keyword structures, and the same surface‑level advice. Search engines are constantly evaluating which sources add genuine clarity or expertise to a topic and which ones simply echo what already exists. When you realize that millions of new pages are published every day, it becomes easier to understand why the default outcome for most content is invisibility. Ranking well requires producing something meaningfully better than the alternatives already competing for the same audience.

Once teams adopt the “deserve to rank” test, the direction of the work changes quickly. Instead of asking why the algorithm isn’t rewarding their page, they begin asking how the page could become the obvious best result on the topic. That might mean expanding the article significantly, incorporating original insights, documenting real-world experience, or reorganizing the information so that it becomes dramatically easier for readers to understand. The goal becomes creating a piece of content that clearly wins a side-by-side comparison with the existing results rather than one that merely satisfies the recommendations of SEO plugins.

Occasionally the opposite conclusion emerges. After carefully reviewing the search results, you might realize that your content genuinely is stronger than what currently ranks. The explanation may be clearer, the sources more credible, or the structure significantly easier for users to follow. When that happens, the problem is rarely the content itself. The problem is authority. The page may live on a younger domain, lack the external citations that signal credibility to search engines, or sit inside a content ecosystem that has not yet built topical authority around the subject.

This distinction between content quality and authority signals is where senior SEO strategy begins to matter. If a page does not deserve to rank yet, the task is editorial improvement. If the page already deserves to rank but lacks authority, the task becomes reputation building through links, brand mentions, and broader topical coverage. The strategist’s job is to diagnose which of those two problems actually exists. Without that clarity, teams often waste months optimizing details that were never the real barrier to ranking in the first place.
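
The diagnosis described above can be written down as a simple decision rule. This is only a sketch: the names `NextStep` and `diagnose` are hypothetical, the two yes/no inputs stand in for judgments a strategist has to make by hand, and the third branch (content and authority both look fine) is an assumption added for completeness rather than something covered in this article.

```python
from enum import Enum


class NextStep(Enum):
    EDITORIAL_IMPROVEMENT = "make the page genuinely better than what ranks today"
    REPUTATION_BUILDING = "earn links, brand mentions, and broader topical coverage"
    LOOK_ELSEWHERE = "check other factors, such as technical issues"  # assumption, see lead-in


def diagnose(deserves_to_rank: bool, has_enough_authority: bool) -> NextStep:
    """Map the two honest answers from a side-by-side SERP review to the next task."""
    if not deserves_to_rank:
        # The content loses the side-by-side comparison, so authority work is premature.
        return NextStep.EDITORIAL_IMPROVEMENT
    if not has_enough_authority:
        # The content wins the comparison but the domain lacks credibility signals.
        return NextStep.REPUTATION_BUILDING
    return NextStep.LOOK_ELSEWHERE


# Example: a strong article on a young domain with few external citations.
print(diagnose(deserves_to_rank=True, has_enough_authority=False).value)
```

The ordering is deliberate: the content question is asked first, because building authority for a page that does not yet deserve to rank spends effort on the wrong barrier.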

The uncomfortable truth is that many companies skip this analysis entirely. They assume that hiring an SEO agency or installing optimization tools should automatically produce rankings. But in a competitive, zero-sum search environment, results are usually earned by the teams willing to ask the harder question first. Before worrying about tactics, they ask whether their work truly deserves to win.

When the answer is yes but the visibility still is not there, the next step is usually authority-building and strategic execution rather than more surface-level tweaking. Our low-traffic SEO case study is a good example of what that recovery path can look like in practice.

Topic Expertise Beats Keyword Optimization

One of the biggest misunderstandings about modern SEO is the belief that rankings are primarily determined by keyword optimization. Keyword research tools are valuable, but they provide the same datasets to every competitor targeting a topic. When dozens of companies are analyzing identical search volumes, identical SERP snapshots, and identical keyword suggestions, the data itself rarely creates the advantage. What determines the outcome is how well a team understands the topic behind those keywords and whether they can produce information that genuinely advances the conversation.

Search engines increasingly reward what can be described as topic authority. Instead of evaluating a page in isolation, modern ranking systems look for evidence that a website consistently demonstrates expertise across a subject area. This includes the depth of coverage across related topics, the relationships between articles within a content ecosystem, the credibility of the sources being cited, and the reputation of the entity publishing the material. A site that publishes a single article about a topic will almost always struggle to compete with a publication that has spent years building a library of interconnected resources explaining the subject from multiple angles.

That idea is also where “E-E-A-T” stops being an acronym and starts being a practical publishing standard. Google’s Search Quality Evaluator Guidelines spell out Experience, Expertise, Authoritativeness, and Trust as the lens raters use when assessing the quality of a page, and the shortcut interpretation is simple: can a reader tell who is speaking, why they should be believed, and what real-world exposure shaped the advice? In practice, “Experience” looks like first-hand examples, original screenshots, field photos, or real workflows rather than generic summaries. “Expertise” shows up as correct terminology, sensible prioritisation, and the ability to explain trade-offs without hand-waving. “Authoritativeness” and “Trust” are earned through citations, consistent coverage over time, and the kind of reputational signals that other credible sites and communities attach to your brand.

Large-scale correlation studies reinforce this pattern. Research from organizations such as Ahrefs and Backlinko repeatedly shows that first-page search results tend to demonstrate deeper topic coverage than the average page. One widely cited analysis found that the typical page ranking on the first page of Google contains roughly 1,447 words of content, suggesting that high-ranking pages often invest more effort in explaining a topic thoroughly. Length alone does not guarantee quality, but it reflects a broader pattern: pages that explore a topic comprehensively tend to outperform those that only address it superficially. Ahrefs has also reported that a majority of pages ranking in the top 10 are not new, with many being more than three years old, which is another way of saying that search engines often reward durable, proven topic coverage rather than freshly published rewrites. When you consistently publish depth over time, you are not just trying to win a single keyword—you are training the web to treat your domain as the obvious place to learn that subject.

These studies also repeatedly highlight another reinforcing signal: the number of unique domains referencing a page or site. Large‑scale analyses from Ahrefs and Moz consistently show that pages with a higher number of referring domains tend to rank more strongly in competitive search results, which reinforces how topic authority and external reputation signals compound together over time.

Keywords vs Topics

A more effective approach begins by studying the broader topic behind the query. What questions do people ask before they type the keyword? What real-world situations lead them to the problem? What information is missing from the current search results? These questions shift the focus from mechanical optimization to genuine expertise. Instead of producing a page that simply mirrors existing articles, the goal becomes creating a resource that actually improves the quality of information available to searchers.

This distinction matters because search engines are constantly evaluating whether a page adds value to the ecosystem of information surrounding a topic. If an article merely repeats what already exists, there is little reason for a search engine to surface it above the existing results. But if the page clarifies confusing advice, introduces practical insights, or documents real-world experience, it becomes a stronger candidate for visibility.

The Field Research Advantage

One of the most reliable ways to create this kind of expertise-driven content is surprisingly simple: spend time with the people who actually do the work being described. Consider a hypothetical example involving a plumbing business trying to build search visibility. A conventional SEO workflow might begin with keyword research identifying phrases such as “how to fix a leaking sink” or “why is my drain clogged.” An inexperienced strategist might then commission generic articles explaining common repair steps already documented across hundreds of competing websites.

A more experienced strategist would approach the situation differently. Instead of relying solely on keyword tools, they might spend time observing the plumber’s daily work. During a ride-along they would hear the language customers use when describing problems, see the diagnostic process professionals follow, and watch how different tools are used to resolve issues quickly and safely. Those observations reveal details that keyword research alone cannot capture.

From that field research, entirely new content opportunities emerge. Articles could explain the most common mistakes homeowners make when attempting repairs, document the specific tools professionals rely on to diagnose hidden leaks, or show step-by-step photographs of real repair scenarios. These insights are difficult for competitors to replicate because they originate from practical experience rather than desk research. Over time, publishing this type of material builds a reputation for expertise that search engines increasingly reward.

The strategic implication is clear. Keyword research identifies opportunities, but topic expertise determines whether a site deserves to rank for them. When search engines decide which pages to surface for complex queries, they increasingly favor sources that demonstrate real knowledge, practical experience, and a consistent record of contributing valuable information to the subject area. In competitive search environments, that difference often determines which pages quietly disappear and which become the trusted references cited across the web.

Backlinks Still Matter. But Only the Ones That Play Hard to Get

Backlinks remain one of the most widely discussed signals in search engine optimization, yet they are also one of the most misunderstood. At a basic level, a backlink functions as a vote of confidence from one website to another. When a publication references a page as a source, it is effectively telling search engines that the information deserves attention. Years of large-scale correlation studies from organizations such as Ahrefs, Moz, and Backlinko continue to show a strong relationship between the number of referring domains pointing to a page and its ability to rank competitively in search results. That relationship does not mean links are the only factor that matters, but it does suggest that external validation still plays a central role in how search engines evaluate credibility.

The complication is that the web has become saturated with easy links. Directories, automated guest post marketplaces, private blog networks, and other low-barrier publishing environments make it possible for almost anyone to accumulate backlinks with enough persistence. From a search engine’s perspective, however, these signals are weak because they do not represent meaningful editorial judgment. If a link can be created quickly, cheaply, and at scale, it provides little evidence that the destination page is genuinely trusted. This is also why tactics that appear legitimate on the surface, such as mass press-release distribution, rarely produce meaningful SEO value. Press releases can be useful for communicating news, but when they are used primarily to manufacture backlinks across large distribution networks, they can violate Google’s spam policies, and the resulting links are typically ignored as ranking signals.

This leads to a simple but important principle that experienced SEO practitioners understand instinctively: the easier a link is to obtain, the less valuable it tends to be. High‑value links are difficult precisely because they require editorial scrutiny. A journalist citing your research, a respected industry blog referencing your analysis, or a well‑maintained resource page recommending your content all represent signals that someone made an intentional decision to reference your work. Those links carry weight not because they are technically different, but because they reflect genuine recognition from another trusted source.

Another misunderstanding in modern link building comes from the way many practitioners evaluate the quality of potential backlinks. Inside SEO communities it is common to hear questions such as “What is the domain rating?” or “What is the SEMrush authority score?” before any deeper discussion about the site itself. These metrics can be useful directional indicators, but they are ultimately proprietary scores generated by third‑party tools. They were designed to approximate search engine behavior, not to represent the actual economic value of a link. When link decisions are driven primarily by these numbers, teams can end up optimizing for a tool’s scoring system rather than for the business outcome that SEO is supposed to produce.

More experienced strategists tend to evaluate link opportunities very differently. Instead of focusing on a single authority metric, they ask questions that are much closer to revenue reality. Does the site receive meaningful organic traffic? Is it topically relevant to the subject being discussed? Does the publication have an engaged audience that actually reads and shares its content? These signals are harder to compress into a single score, but they are far more predictive of whether a link will contribute to real visibility and trust over time. It is not uncommon to find websites with relatively modest domain‑rating scores that generate significant organic traffic, while other domains with impressive metrics attract little audience attention at all.

In the emerging era of AI‑assisted search, even deeper signals are beginning to matter. Some advanced practitioners now monitor patterns such as how frequently large language model crawlers access a domain, which can sometimes be observed through server or DNS‑level traffic analysis. High crawl frequency from AI systems can indicate that a source is repeatedly consulted when models gather information from the web. While this type of signal sits well outside the metrics tracked by most SEO tools, it reflects the broader shift happening across search: algorithms increasingly evaluate reputation and information trustworthiness in ways that cannot be reduced to a single score.
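As a rough illustration of the server-side analysis described above, the following Python sketch counts requests from a few AI crawlers in a web server access log. It assumes the common "combined" log format, where the user agent is the last quoted field, and the token list is illustrative rather than exhaustive. The sample log lines are invented for the example.

```python
import re
from collections import Counter

# User-agent tokens sent by some AI crawlers. Illustrative only; check each
# vendor's documentation for the current list before relying on it.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")

# Assumption: "combined" log format, where the user agent is the last quoted field.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Count requests per AI crawler token across an iterable of log lines."""
    hits = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue  # line does not end with a quoted user-agent field
        user_agent = match.group(1).lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in user_agent:
                hits[token] += 1
                break
    return hits


# Invented sample lines (documentation IP ranges, hypothetical paths).
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [10/Jan/2026:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 734 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:07:00 +0000] "GET /guide HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.9 - - [10/Jan/2026:10:09:00 +0000] "GET / HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits = count_ai_crawler_hits(sample_log)
print(hits)
```

A count like this says only how often a crawler requested pages. It does not show whether a model actually cites the site, so it is best read alongside the other signals discussed here.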

It is also worth remembering how dramatically the link landscape has changed since the early days of SEO. Years ago, search engines were far more vulnerable to manipulation through spam links, which even enabled tactics such as negative SEO where competitors attempted to harm a site’s rankings by pointing low‑quality links at it. Those loopholes were gradually closed as search engines improved their ability to ignore low‑trust signals and focus on the links that actually represent editorial endorsement. Today the emphasis is far less about counting every link and far more about identifying the small number that reflect genuine credibility.

The practical implication is simple: authority cannot be reduced to a metric inside a dashboard. Domain rating, authority scores, and similar numbers can be helpful heuristics, but they are only approximations of how search engines interpret reputation. The professionals who consistently win in search tend to treat those metrics as rough indicators rather than decision‑making rules. They focus instead on the harder question: whether a link comes from a source that people and algorithms alike would reasonably trust.

For pages that already pass the “deserve to rank” test discussed earlier, these authority signals can make the difference between stagnation and visibility. It is common to encounter situations where a piece of content is genuinely better than the competing pages but still struggles to reach the top results because the surrounding domain lacks reputation signals. Strategic link acquisition in these cases functions less like manipulation and more like distribution. The goal is to ensure that strong work receives the recognition necessary for search engines to treat it as a credible source.

One of the most reliable approaches in these situations is identifying opportunities where links already exist but the underlying references have decayed. Broken‑link outreach, for example, involves finding pages that reference resources which no longer exist and offering a high‑quality replacement. Because the site owner already intended to cite information on that topic, suggesting a credible alternative is often welcomed rather than resisted. When executed responsibly, tactics like this operate less as link building and more as maintenance of the web’s information ecosystem.
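The discovery step of broken-link outreach can be sketched in a few lines of Python. This is a minimal sketch, not a production crawler: the function names are hypothetical, and the HTTP status lookup is passed in as a callable so that the example runs without network access. In real use that callable would wrap an actual HTTP request. Treating 404 and 410 as "broken" is also an assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def find_broken_links(page_html, page_url, fetch_status):
    """Return absolute URLs linked from the page whose status is 404 or 410.

    fetch_status is a caller-supplied function mapping a URL to an HTTP status code.
    """
    collector = LinkCollector()
    collector.feed(page_html)
    broken = []
    for href in collector.links:
        absolute = urljoin(page_url, href)
        if urlparse(absolute).scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, and similar links
        if fetch_status(absolute) in (404, 410):
            broken.append(absolute)
    return broken


# Invented example page and a fake status lookup standing in for real requests.
sample_html = """
<p>Useful reading: <a href="https://old.example.org/leak-guide">leak guide</a>,
<a href="/resources/tools">tool list</a>, <a href="mailto:editor@example.com">contact</a>.</p>
"""
fake_statuses = {
    "https://old.example.org/leak-guide": 404,
    "https://host.example.com/resources/tools": 200,
}

broken = find_broken_links(
    sample_html,
    "https://host.example.com/articles/plumbing",
    lambda url: fake_statuses.get(url, 200),
)
print(broken)
```

Finding the dead reference is the easy part. The outreach only works if the suggested replacement genuinely covers what the original resource did.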

The broader point is that links still matter, but not in the simplistic way they were often described a decade ago. Search engines are no longer counting links indiscriminately; they are interpreting them as signals of trust and reputation within a network of sources. For businesses attempting to compete in organic search, the challenge is not simply acquiring backlinks but earning the kind of references that indicate real credibility. In a web flooded with easy signals, the links that actually influence rankings are usually the ones that were hardest to earn.

Brand Mentions, Entities, and the New Reputation Layer of SEO

Backlinks remain one of the most visible signals of credibility on the web, but modern search engines evaluate reputation through a much broader lens than links alone. Over the past decade, Google has invested heavily in systems designed to understand entities — the people, companies, brands, and organizations behind information published online. Instead of simply indexing pages, search engines now attempt to map relationships between entities and determine which ones consistently demonstrate expertise within particular topics. In practical terms, reputation across the wider web now influences search visibility in ways that extend far beyond hyperlinks.

This shift reflects a broader technical evolution inside search engines themselves. Google’s Knowledge Graph alone is estimated to contain more than 500 billion facts about over 5 billion entities, illustrating how heavily modern search relies on mapping relationships between people, organizations, and topics rather than simply indexing isolated documents.

Google has described this evolution with the phrase "things, not strings": a move from simply matching keywords in documents to understanding the real-world entities behind those words. This means search engines increasingly evaluate who is providing information, how often that entity is referenced across the web, and whether credible sources consistently associate that entity with a particular topic. For SEO strategists, this represents a fundamental change in how authority is built: ranking well is no longer only about publishing optimized pages, but about establishing a recognizable entity that search systems learn to trust.

For experienced SEO strategists, this shift introduces an additional competitive layer that many organizations still overlook. Links are easy to measure, which is why the industry often treats them as the primary signal of authority. Entity reputation is far less visible because it develops through a web of subtle signals that traditional SEO tools struggle to capture. A brand might be mentioned regularly in forums, referenced in newsletters, discussed in communities, cited in conference talks, or quoted in media coverage without those references always including a link. From a human perspective these signals clearly indicate influence and expertise. Modern search systems increasingly attempt to interpret those same signals algorithmically.

Google Ranks Entities, Not Just Pages

Understanding modern search requires recognizing that Google is no longer just ranking pages. It is attempting to understand the entities behind those pages. Systems such as the Knowledge Graph connect brands, organizations, authors, and topics into a network of relationships that help search engines determine credibility. When a brand repeatedly appears in credible discussions about a subject, the algorithm begins associating that entity with expertise in that area. In effect, the search engine is asking the same question that humans ask when evaluating advice: who is speaking, and why should we trust them?

This is why two pieces of content covering the same topic can perform very differently even when their technical SEO appears similar. One article may sit on a domain that search engines already associate with the topic through years of coverage, citations, and references across the web. Another may come from a newer site that has produced a well-written article but lacks a broader reputation footprint. Even if the information is accurate in both cases, the entity with the stronger reputation is far more likely to receive visibility because search engines have greater confidence in the credibility of that source.

The evolution of search queries reinforces this behavior. Research from Semrush and other search analytics platforms shows that queries have steadily become longer and more conversational as users search in natural language and interact with voice assistants and AI-powered interfaces. When people ask nuanced or situational questions, search engines must interpret intent rather than simply matching keywords. In those situations, algorithms often favor sources that appear to possess real expertise on the subject, and entity reputation becomes a critical signal for deciding which voices should be surfaced in those results.

Reputation Signals Beyond Backlinks

One of the most important consequences of entity-based search is that brand reputation across the broader web begins to influence rankings. Mentions in industry discussions, reviews on trusted platforms, editorial references in news publications, and conversations within specialist communities all contribute to the perception that a brand is credible within a particular topic. These signals do not always appear in the form of traditional backlinks, yet they still help search engines interpret whether a source is widely recognized within its field.

Consider a simple example involving a local service business competing for search visibility around a specialized repair problem. One company may have accumulated several backlinks through directories or small guest posts. Another might have fewer links but years of positive customer reviews, mentions in local publications, and active discussion within community forums. From a purely link-counting perspective the first business may appear stronger. From a reputation perspective the second business may look far more credible. Modern search systems increasingly attempt to interpret this broader context when deciding which results deserve visibility.

For SEO professionals this introduces a significant measurement challenge. Most SEO platforms excel at tracking backlinks, keyword rankings, and technical metrics. They are far less capable of capturing unlinked brand mentions, community conversations, or the broader narrative surrounding a brand across the web. Some of the most important signals influencing modern search visibility therefore sit outside the dashboards that marketers rely on every day.
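To make the measurement gap concrete, here is a small Python sketch that separates linked from unlinked brand mentions in a single page of HTML. It is a simplification under stated assumptions: the brand name and domain are hypothetical, matching is a plain case-insensitive substring search, and a mention counts as "linked" only when it sits inside an anchor whose href contains the brand's domain.

```python
from html.parser import HTMLParser


class MentionScanner(HTMLParser):
    """Count brand mentions inside and outside links to the brand's own domain."""

    def __init__(self, brand, brand_domain):
        super().__init__()
        self.brand = brand.lower()
        self.brand_domain = brand_domain.lower()
        self.in_brand_link = False
        self.linked = 0
        self.unlinked = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = (dict(attrs).get("href") or "").lower()
            self.in_brand_link = self.brand_domain in href

    def handle_endtag(self, tag):
        if tag == "a":
            self.in_brand_link = False

    def handle_data(self, data):
        count = data.lower().count(self.brand)
        if self.in_brand_link:
            self.linked += count
        else:
            self.unlinked += count


# Hypothetical brand and invented page content.
sample_html = (
    "<p>We tested tools from Acme Plumbing last year.</p>"
    '<p>See <a href="https://acmeplumbing.example/guide">Acme Plumbing\'s guide</a>.</p>'
    "<p>Acme Plumbing also spoke at the regional expo.</p>"
)

scanner = MentionScanner("Acme Plumbing", "acmeplumbing.example")
scanner.feed(sample_html)
print(scanner.linked, scanner.unlinked)
```

A backlink tool would report one reference for this page. A reader, and plausibly an entity-aware search system, would register three.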

This difficulty is precisely why entity reputation has become such a powerful differentiator. Building a credible presence across editorial publications, communities, and industry discussions requires sustained expertise and participation that cannot be automated through a single tactic. It develops gradually through consistent contributions, useful insights, and the willingness of other people to reference your work. Over time those signals reinforce each other. The more frequently a brand is mentioned in credible contexts, the easier it becomes for search engines to interpret that brand as a trusted entity within the topic ecosystem.

In practical terms, this means that the competition for search visibility now extends far beyond optimizing individual pages. Winning in modern search increasingly requires building a recognizable presence across the entire information environment surrounding a topic. Brands that consistently contribute knowledge, earn editorial coverage, and participate in meaningful conversations accumulate the reputational signals that algorithms interpret as authority.

For businesses focused only on traditional SEO metrics, this layer of competition can remain invisible until it is already influencing results. A competitor with fewer backlinks and similar on-page optimization may still outrank them simply because the broader web already treats that competitor as the more credible voice. From the algorithm’s perspective the decision becomes obvious. In an information ecosystem increasingly organized around entities, reputation is no longer optional. It is one of the quiet forces determining which sources search engines trust enough to recommend.

Why AI Did Not Kill SEO (It Made It Harder)

Few claims have circulated through the marketing industry more aggressively over the past two years than the idea that artificial intelligence has “killed SEO.” The argument usually follows a simple logic: if search engines now generate answers directly, users will stop visiting websites and the traditional mechanics of ranking will disappear. In reality, the opposite dynamic is unfolding. AI systems have not removed the need for search optimization; they have intensified the competition for credible information sources. If anything, AI has raised the bar for what content must demonstrate in order to be trusted, cited, and surfaced inside modern search environments.

This should not be surprising to anyone who has worked in search for a long time. SEO has always been the practice of understanding how algorithms interpret information and positioning content so that those systems can recognize its value. Large language models, conversational search interfaces, and AI‑generated summaries represent a change in how search engines present information, but they do not eliminate the underlying problem those systems must solve. Algorithms still need to determine which sources are credible, which explanations are accurate, and which entities have demonstrated reliable expertise on the topic being discussed.

AI Increased Competition Rather Than Replacing SEO

The real impact of generative AI has been an explosion in the supply of online content. Marketing teams now have the ability to produce articles, guides, and landing pages at a scale that would have been impossible only a few years ago. Surveys such as HubSpot’s State of AI in Marketing report indicate that more than 70 percent of organizations now use AI tools as part of their content production workflows. From a search engine’s perspective, this creates a dramatically larger universe of information that must be evaluated and filtered for quality.

When the supply of content increases this quickly, the mechanisms used to determine credibility inevitably become stricter. Search engines cannot simply rank every new article that appears on the web, and AI‑generated material often repeats the same publicly available explanations found across existing sources. As a result, the signals that differentiate trustworthy information become more important than ever. Depth of expertise, original insight, credible citations, and consistent reputation across the web all become stronger signals because they help algorithms separate genuinely useful material from the overwhelming volume of generic content.

This is precisely why experienced SEO professionals have not disappeared from the industry despite the rapid adoption of AI tools. If anything, the opposite has happened. As the web fills with increasingly similar content, the ability to produce work that genuinely stands out becomes far more valuable. Algorithms may be able to generate summaries, but they still rely on trustworthy sources to train on, reference, and cite when generating those summaries.

The Myth of Infinite AI Content

The assumption that AI content automatically dominates search results misunderstands how modern ranking systems evaluate information. While generative models can assemble coherent articles quickly, they rarely possess firsthand experience, original data, or the practical insights that come from working directly within a field. Search engines are increasingly tuned to detect and prioritize signals that indicate real expertise rather than surface‑level synthesis of existing material.

In practice this means that the flood of AI‑assisted content often reinforces the value of sources with genuine authority. Websites that document real-world experience, publish original research, or consistently contribute new insights to a topic tend to stand out more clearly as the surrounding information ecosystem becomes saturated with repetition. When a model encounters thousands of near‑identical explanations of a topic, the handful of sources that offer unique evidence or practical examples become easier to identify as authoritative.

This pattern mirrors what has happened repeatedly throughout the history of search. Every time new publishing technologies reduce the cost of producing content, the volume of information increases dramatically. In response, search engines evolve toward signals that are harder to fabricate at scale. Links once served that role because they required another site’s editorial judgment. Today, entity reputation, brand mentions, and demonstrable expertise increasingly serve the same function.

Seen clearly, AI has not ended SEO. It has simply moved the goalposts. The competition is no longer about who can publish the most content or insert the most keywords into a page. It is about which organizations can demonstrate the clearest expertise, the strongest reputation, and the most trustworthy information within a topic ecosystem. For businesses willing to invest in that level of credibility, search visibility remains one of the most powerful distribution channels on the internet.

And for the strategists capable of building those signals consistently, the value of their work has only increased.

The Reality of Link Building in 2026

Link building remains one of the most discussed, and most misunderstood, parts of SEO. The conversation tends to swing between two extremes. One camp argues links barely matter anymore, as if rankings are now purely a function of on-page work and technical hygiene. The other camp still treats links like a numbers game, where enough placements, enough swaps, and enough “authority” metrics will eventually force the SERP to move.

In 2026, both views miss what’s actually happening. Links still matter because they are one of the cleanest external signals that a page is worth trusting, but the meaning of a link has tightened. Search engines are less interested in the existence of a hyperlink and more interested in the circumstances that produced it. A citation that shows up because an editor genuinely wanted to reference a source is interpreted differently from a link that exists because it was bought, traded in bulk, or dropped into a low-friction publishing environment.

That distinction is why guest posting still works when it is done like publishing, not like link placement. There is nothing inherently manipulative about contributing a thoughtful piece to a relevant publication, especially when it gives the host’s audience an argument, a framework, or a set of examples they would not otherwise get. In many industries this is how expertise spreads. People write op-eds, contribute commentary, and share analysis outside their own platforms, and the citations that accompany that contribution behave like any other editorial reference.

For teams that want that approach executed properly, our guest post link building service focuses on quality placements, editorial fit, and links that actually support authority instead of just inflating a spreadsheet.

It is also worth naming the obvious. This article is a guest contribution, and that is the point. A good guest post should not feel like a transaction hidden inside prose. It should feel like a coherent, useful piece that deserves to exist on the host site whether a link is present or not. When guest posting is treated as distribution for ideas, not a loophole for authority, it becomes one of the few link strategies that can remain legitimate over time.

The problem is that the industry has never lacked shortcuts. Private blog networks, automated guest-post marketplaces, large-scale link exchanges, and expired-domain recycling are all variations of the same bet: that you can manufacture the appearance of credibility faster than you can earn it. Sometimes these tactics inflate tool metrics, at least for a while. You might see a domain rating tick upward or a backlink graph look healthier. What you often do not see is revenue moving, because the underlying trust signal is thin.

Expired-domain strategies are the cleanest example. Buying a domain that once earned real citations and then repurposing it for an unrelated business can look impressive in an SEO dashboard. Over time, the mismatch becomes difficult to hide. Algorithms evaluate topic consistency, the quality of surrounding references, and whether the new entity actually belongs in the same conversation as the old one. If the only thing you inherit is the link graph, and not the relevance or the reputation that produced it, the gains tend to be temporary.

The same pattern shows up in low-value guest-post networks. When the content exists primarily to host anchors, the editorial intent is obvious. The resulting links may create noise, but they rarely create authority. Search engines have spent years learning to separate editorial endorsement from industrial-scale link placement, and that learning accelerates every time a tactic becomes popular enough to be packaged and sold.

For pages that genuinely deserve to rank, link building becomes less like manipulation and more like distribution. Strong work still has to be discovered. A good citation exposes a piece of content to real readers, journalists, communities, and other publishers who may reference it again in contexts that actually matter. That is how authority compounds in the real world, not through volume, but through repeated recognition from credible places.

This is why the most valuable links tend to come from environments with real editorial friction. Academic citations, respected industry publications, curated resource lists, and tightly moderated knowledge platforms do not link freely. They link when a source improves the quality of the information they are responsible for. From an algorithm’s perspective, that friction is the point. It is a proxy for scrutiny.

Once you see links this way, the work becomes clearer. You are not trying to win a score inside Ahrefs. You are trying to earn citations that a reasonable person would trust, and that a machine can safely treat as evidence. In the modern web, some of the hardest links to earn come from platforms where that scrutiny is almost absolute.

The Hardest Links to Earn Are the Links That Matter

Some links are valuable because of where they sit on a page. The hardest links are valuable because of what had to happen for them to exist at all. They come from places where someone can say “no,” where an editor has a reputation to protect, and where the default posture is skepticism. In a web flooded with easy placements, that friction is the point. It is one of the few signals that still behaves like real trust.

The mistake many teams make is assuming “high authority” is a property you can buy on demand. In practice, the links that consistently move outcomes are the ones you can’t reliably manufacture. You earn them by publishing something that improves the host’s information environment, then surviving the scrutiny that comes with it. In other words, these links are not a tactic. They are a side effect of doing the work at a standard other people are willing to stake their own credibility on.

Editorial Friction Is the Signal

A link from a tightly edited publication is not just a vote. It is a record of process. Someone evaluated the claim, checked the source, decided it was safe to reference, and then attached their brand to it. That is why an editorial link often does more than “pass authority.” It becomes a piece of public evidence that your work belongs in the serious version of the conversation.

This is also why link quality has become easier to explain to non‑SEOs when you strip out the jargon. Ask a simple question: if a reader followed that citation, would they feel more informed and more confident, or would they feel like they were sold something? The more the link behaves like a genuine reference, the more it tends to behave like a ranking signal.

The same principle applies to other high-friction environments. Universities, standards bodies, regulators, and long-standing trade organizations do not link freely. They link when a source materially improves clarity or verification. Search engines understand that. When you earn citations from places with institutional caution, you are not just collecting backlinks. You are collecting credibility.

Wikipedia Links Are Hard to Get and Harder to Keep

Wikipedia is the cleanest example of editorial friction in the modern web because it is engineered to distrust you by default. A Wikipedia link is not “hard” because the form is complicated. It is hard because the community is trained to remove anything that looks self‑serving, weakly sourced, or promotional. Even when a link gets added, it is always provisional. It can be reverted by someone who disagrees, challenged by someone who wants stronger sources, or removed because it no longer meets the bar.

This is why Wikipedia links matter even though they are typically nofollow. The value is not direct link equity. The value is what the link implies about the underlying evidence. If a citation survives on Wikipedia, it usually means the content it points to was supported by stronger sources and framed in a way that made sense to impartial editors.

If you want a practical case study of what this scrutiny looks like, look at how Wikipedia handles claims about emerging industries and standards. A page cannot lean on a company’s own press release or marketing copy. It needs independent coverage, strong references, and language that reads like documentation, not persuasion. When an organization earns a Wikipedia‑grade citation through that process, it is a signal that the work can survive in an environment where the incentives are tilted toward removal.

A useful example in the Web3 credibility space is VaaSBlock’s documentation of Wikipedia’s recognition of the Risk Management Assessment (RMA), which walks through how the reference was treated and why the underlying sourcing mattered.

The lesson is not “go chase Wikipedia links.” The lesson is that the easiest way to earn a Wikipedia link is to stop thinking about Wikipedia and start thinking about independent verification. If your work is genuinely useful, properly sourced, and consistently referenced by credible third parties, Wikipedia becomes a downstream outcome, not a target.

The Links That Compound

The hardest links tend to be compounding assets because they change how other people treat your work. One credible editorial citation can lead to another because journalists, analysts, and researchers often follow the same trail of references. A strong link makes your content easier to trust. Trust makes your content easier to cite. And those repeated citations can become the reputational scaffolding that supports everything else you publish.

If that sounds slow, it is. It is also why elite SEOs get paid what they do. They are not just building pages. They are building credibility ecosystems that can survive scrutiny, earn repeat citations, and keep compounding long after a campaign ends.

The Economic Value of Great SEO

Search engine optimization is often discussed as a marketing tactic, but in mature organizations it behaves more like a long‑term growth asset. A successful SEO strategy does not simply generate traffic for a campaign window; it produces durable visibility that compounds over time. Once a page earns a strong position in search results, it can continue attracting qualified visitors for months or even years with relatively modest maintenance. Unlike paid advertising, where traffic stops the moment budgets are paused, organic visibility continues delivering demand long after the original work has been completed.

This compounding effect is one of the reasons organic search consistently represents one of the largest acquisition channels for digital businesses. Research from BrightEdge estimates that organic search drives roughly **53 percent of all trackable website traffic**, making it the single largest measurable source of discovery for many organizations. When a company consistently ranks for high‑intent queries within its industry, it effectively owns a portion of the demand already being expressed by potential customers. Each ranking page becomes a durable asset that captures attention at the exact moment people are searching for solutions.

SEO as a Compounding Growth Channel

The long‑term economics of this model can be remarkably powerful. A single well‑executed page targeting a high‑value topic may generate thousands of qualified visits every month. Over several years that traffic can represent hundreds of thousands of interactions with potential customers, many of whom would otherwise have been acquired through paid advertising. When those visits convert into leads, sign‑ups, or purchases, the cumulative financial impact can easily reach millions of dollars in attributable revenue.
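To make the scale concrete, here is a rough sketch of that arithmetic. Every input is a hypothetical assumption chosen for illustration (3,000 visits per month, a 2 percent conversion rate, and $500 of revenue per conversion); none of these figures come from a study, and real numbers vary widely by industry.

```python
def attributable_revenue(monthly_visits: int, years: int,
                         conversion_rate: float,
                         revenue_per_conversion: float) -> float:
    """Rough revenue estimate for one page, assuming flat traffic and a constant conversion rate."""
    total_visits = monthly_visits * 12 * years
    conversions = total_visits * conversion_rate
    return round(conversions * revenue_per_conversion, 2)

# Hypothetical inputs, for illustration only.
total_visits = 3_000 * 12 * 3  # visits over three years
revenue = attributable_revenue(3_000, 3, 0.02, 500.0)
print(f"{total_visits:,} visits, about ${revenue:,.0f} in revenue")
```

Under these assumptions a single page accounts for a little over one hundred thousand visits and roughly one million dollars across three years. The point is less the exact figure than how quickly modest monthly numbers add up.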

This is why some of the most successful digital companies have invested heavily in organic search as a strategic channel. Businesses such as HubSpot, NerdWallet, and Zapier built enormous audiences by publishing deeply useful resources that consistently rank for topics their customers care about. In each case the companies created large ecosystems of content that answered practical questions, explained complex processes, and documented real‑world use cases in their respective industries. Over time these resources became trusted references across the web, attracting links, citations, and brand recognition that reinforced their visibility.

The result is an acquisition engine that continues operating long after the original content has been published. A page written five years ago may still generate meaningful traffic today if it remains the best explanation of a topic. Unlike most forms of marketing, the marginal cost of each additional visitor arriving through organic search is essentially zero. Once the authority has been earned, the asset continues producing value.

Why Elite SEOs Are So Valuable

The strategic importance of this compounding model helps explain why experienced SEO practitioners command such high salaries and consulting fees. A skilled search strategist does not simply optimize pages; they design systems that capture demand across entire topic ecosystems. Their decisions influence which subjects a company invests in, how authority is built across related content, and how reputation signals accumulate across the web. When executed well, those decisions determine whether a business becomes the default source of information in its category or remains invisible behind competitors.

In highly competitive industries the financial implications can be enormous. Consider a software company competing for search visibility around a product category with tens of thousands of monthly searches. If one organization consistently captures the top ranking positions for those queries, it effectively becomes the first brand many potential customers encounter. Large‑scale click‑through studies from organizations such as Backlinko and Ahrefs show that the first organic result alone can capture roughly **27–30 percent of total clicks**, which means the company occupying that position often receives a disproportionate share of the available attention.
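As a minimal sketch of what that share means in practice, the snippet below applies the 27 to 30 percent range to a hypothetical category with 40,000 monthly searches. The search volume and the function name are assumptions for illustration, and actual click-through rates depend on the query and the layout of the results page.

```python
def estimated_clicks(monthly_searches: int, ctr: float) -> int:
    """Estimated monthly clicks for a result with the given click-through rate."""
    if not 0.0 <= ctr <= 1.0:
        raise ValueError("ctr must be a fraction between 0 and 1")
    return round(monthly_searches * ctr)

# Hypothetical category volume; the CTR bounds come from the range cited above.
low = estimated_clicks(40_000, 0.27)
high = estimated_clicks(40_000, 0.30)
print(f"Top result: roughly {low:,} to {high:,} clicks per month")
```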

When those visitors represent potential buyers researching solutions, the revenue impact quickly becomes measurable. A small improvement in ranking for a commercially valuable query can translate into thousands of additional visitors each month. Over time those visitors convert into leads, subscriptions, or purchases that significantly influence the company’s growth trajectory. In that context, the strategist responsible for designing and executing the search strategy is not simply performing a marketing task. They are shaping a revenue channel that compounds year after year.

This is the deeper reason elite SEO professionals are so highly valued. Their work operates on a time horizon measured in years rather than campaigns. A strong search strategy can quietly generate millions of dollars in incremental revenue over time, often without the constant budget increases required by other acquisition channels. For organizations competing in search‑driven industries, the difference between average SEO and exceptional SEO is not a small efficiency gain. It is the difference between participating in the market and dominating it.

Why Most Businesses Underestimate SEO

Despite the enormous economic value organic search can create, many businesses still approach SEO with expectations that do not match how the channel actually works. The misunderstanding usually begins with timelines. In other areas of marketing, results can appear quickly. Paid advertising can generate traffic within hours of launching a campaign, and social media posts can spread widely in a matter of days. SEO behaves differently because search engines must first observe, evaluate, and trust the signals that determine whether a page deserves visibility. That process takes time, and organizations that expect instant outcomes often wrongly conclude that SEO “isn’t working.”

Industry research consistently reinforces this reality. Large-scale analyses from Ahrefs and other SEO research groups have shown that most pages that eventually rank well take months to reach those positions, with many requiring four to twelve months before meaningful movement appears in competitive search results. This delay is not a failure of the strategy. It is a reflection of how search systems evaluate credibility. New content must accumulate signals of usefulness, authority, and trust before algorithms are confident enough to place it above established competitors.

The Expectation Gap

The gap between expectation and reality is one of the most common sources of frustration in SEO engagements. A business might publish new pages, optimize technical elements of a website, and begin acquiring links, only to see little immediate change in rankings. From the outside it can feel as though nothing is happening. In practice, search engines are often quietly observing how those pages perform: whether users engage with them, whether other sites reference them, and whether the domain consistently demonstrates expertise across related topics.

This observation period becomes especially important in competitive niches. If a search query is already dominated by established publications with years of authority and hundreds of citations, a newly optimized page rarely replaces them overnight. Instead, visibility grows gradually as the newer site accumulates its own credibility signals. When businesses understand this process, the timeline begins to make sense. SEO is not simply about publishing content; it is about proving to search engines over time that the content deserves to be trusted.

The misunderstanding is amplified by the way SEO tools present progress. Dashboards often highlight metrics such as keyword rankings, domain authority scores, or technical audit improvements, which can give the impression that success should follow immediately after those indicators improve. In reality these metrics are only partial signals. Search engines evaluate a far wider ecosystem of reputation indicators, many of which take months or years to develop fully. Until those signals accumulate, even well-optimized pages may struggle to break into the most competitive positions.

The Retainer Reality

Another reason businesses underestimate SEO is the way the work is often purchased. Many organizations treat SEO as a small monthly service rather than a strategic investment. A modest agency retainer may fund technical maintenance, periodic content production, and some link outreach, but it rarely provides the level of senior strategic involvement required to compete in highly contested search environments.

This creates a mismatch between ambition and resources. A company may hope to outrank major competitors for commercially valuable queries while allocating only a small budget to the effort. Meanwhile those competitors may be investing heavily in editorial teams, digital PR campaigns, and long-term authority building across their domains. When viewed through that lens, the results are not surprising. Search visibility often reflects the scale of resources and expertise applied to the problem.

Consider a typical scenario in a competitive industry such as software, finance, or health. Several companies may target the same set of high-intent keywords because those queries represent potential customers actively researching solutions. One organization invests in a small SEO retainer focused primarily on technical optimization and a handful of monthly articles. Another invests in experienced strategists, subject-matter experts, digital PR outreach, and extensive editorial coverage of the topic ecosystem. Over time the second company accumulates citations, brand mentions, and deeper topical authority that gradually pushes its pages toward the top of the results.

From the outside, the difference can appear mysterious. Both companies may be “doing SEO,” yet one consistently outranks the other. The explanation is rarely a single tactic. It is the cumulative effect of strategy, expertise, and sustained investment applied over time. Search engines reward domains that demonstrate durable authority, and building that authority requires resources that extend beyond a minimal marketing line item.

This is why experienced SEO professionals often describe search as a long-term asset rather than a quick campaign. When businesses underestimate the scale of effort required, they tend to evaluate the channel too early or with insufficient investment. When they treat it as a strategic growth engine and allow authority to accumulate, the results can transform the economics of customer acquisition.

Understanding that difference is one of the most important mindset shifts a company can make when approaching organic search.

The Long-Term Compounding Value of Search

One of the most important differences between search engine optimization and most other marketing channels is how value accumulates over time. Paid advertising, social media promotion, and many forms of performance marketing behave like rented distribution. The moment the campaign stops or the budget disappears, the traffic disappears with it. SEO works very differently. When a page earns strong search visibility it often continues attracting qualified visitors for years, which means the original investment continues producing returns long after the initial work is finished.

This dynamic is the reason many of the most successful digital companies treat organic search as a strategic asset rather than a campaign. BrightEdge has repeatedly found that organic search is the largest driver of trackable traffic for many sites, so a strong ranking behaves less like a one-time win and more like an acquisition asset the business owns. Instead of constantly paying to reach people who are already searching for solutions in its industry, the business becomes the source those people discover when they look for answers.

SEO Builds Durable Traffic

The durability of search traffic is what makes the channel so powerful. A page that ranks well for an important query can generate thousands of visitors every month with little additional investment beyond occasional updates. Over time those visits add up. A page that brings in two thousand visitors per month will attract twenty‑four thousand visitors over a year. If the page holds its ranking for five years, that single piece of work may generate roughly one hundred twenty thousand visits, many of which represent potential customers actively researching a solution.
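The arithmetic is simple enough to check in a few lines. This sketch assumes traffic stays flat at 2,000 visits per month, as in the example; the function name is illustrative, and real traffic would rise and fall with rankings and seasonality.

```python
def cumulative_visits(monthly_visits: int, years: int) -> int:
    """Total visits over a period, assuming traffic stays flat every month."""
    return monthly_visits * 12 * years

one_year = cumulative_visits(2_000, 1)
five_years = cumulative_visits(2_000, 5)
print(f"1 year: {one_year:,} visits; 5 years: {five_years:,} visits")
```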

Studies examining ranking longevity reinforce this compounding effect. Analysis from Ahrefs has shown that a significant portion of pages ranking in the top ten results are **more than three years old**, demonstrating how durable strong search positions can be once authority is established. In other words, the work performed today can continue producing results long after the team responsible for it has moved on to new projects. Few other marketing activities behave this way. A social media post might drive engagement for a few days, and an advertising campaign may generate leads while the budget lasts, but both disappear quickly once the activity stops.

The long lifespan of strong search pages means the economics of SEO are fundamentally different from other channels. Instead of paying repeatedly to acquire the same audience, businesses invest mostly up front in building a resource, then keep it current with occasional updates while it continues attracting attention. Over time the cumulative value of those visits can dwarf the original cost of producing the content. This is one of the reasons experienced growth teams increasingly treat organic search visibility as a form of digital infrastructure rather than a promotional tactic.

Search Authority Compounds Reputation

The compounding effect of SEO is not limited to traffic alone. Strong search visibility also reinforces brand reputation, which in turn strengthens future rankings. When a company consistently appears in search results for important questions within its industry, users begin associating that brand with expertise on the topic. Journalists, researchers, and industry commentators often discover sources through search results themselves, which means strong rankings can indirectly lead to media citations, conference invitations, and editorial coverage that further expand the brand’s authority.